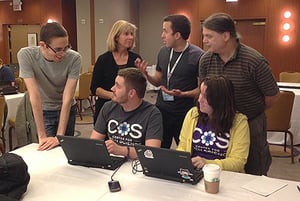

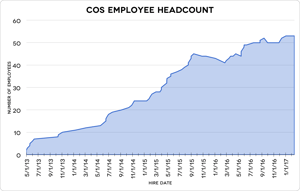

This March marks the 4th anniversary of launching the Center for Open Science. As a nonprofit technology and culture change company, we aim to increase openness, integrity, and reproducibility of research. What started as a small project is now a team of more than 50 employees; we have a suite of free, open products and services to support researchers, journals, funders, institutions, and societies; and we have established dozens of collaborations across disciplines and stakeholder communities.

Some specific achievements include more than 4,800 journals signing the Transparency and Openness Promotion (TOP) Guidelines. The Open Science Framework has a rapidly accelerating user base (45,000 users, growing by more than 150 per day) and hosts more than 86,000 projects and 9,700 registrations. With the community's help, we have built OSF interfaces for preprints, institutional repositories, and meetings that can be branded for community groups to facilitate self-governance and shared infrastructure simultaneously.

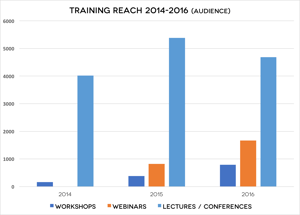

Our training and open research consultants present hundreds of strategy and educational programs each year all over the globe. Hundreds of visitors have come to COS, including funders, service providers, and institutional officials from around the world, to explore collaboration or learn how to implement open science products and processes in their communities.

But, really, we’re just getting started. This month we released our new Strategic Plan, which details how we will transition to scaling the products and services that we offer. There is so much to do to achieve openness of content, data, and workflow across research domains. Our first four years have set us up to turn the corner and demonstrate rapid adoption of open practices across communities.

So what’s next? Our big picture goals are ambitious:

All scholarly content is preserved, connected, and versioned to foster discovery, accumulation of evidence, and respect for uncertainty.

Scholarly service providers are incentivized to compete on quality of service and maximizing transparency of process and content.

Institutions evaluate researchers based on both the content of their discoveries and the process by which they were discovered.

Funders have full insight into the activity and outcomes of their research investments to more efficiently achieve their mission and guide future investments.

Researchers prioritize getting it right over getting it published, and receive credit for scholarly contributions beyond the research article such as generating useful data or authoring code that can be reused by others.

Reviewers provide feedback at all stages of the research lifecycle and openness introduces potential for credit and reputation enhancement for reviewing.

Librarians apply curation and data management expertise throughout the research lifecycle, not just retrospectively.

Consumers have easy access to the evidence supporting scholarly claims.

All stakeholders are included and respected in the research lifecycle.

If any of these describe you, we want to help you and we need your help! Everything COS builds is free and open-source. Without your support our core philosophy to build public goods infrastructure would be impossible. Help us keep the promise of open, transparent and reproducible science moving forward by making a generous donation today. Thank you for your support!

It is impossible to overstate how important the generosity of our sponsors and partners has been, especially the Laura and John Arnold Foundation, who provided the startup grant responsible for launching the Center for Open Science. COS began as two laboratory projects with lots of ideas and a minimal budget. LJAF’s support fundamentally changed the trajectory of our work, enabling us to convert goals into action. In these first four years, we have been able to participate as a contributor to the open science movement across many disciplines and stakeholder communities. Our open source products and services are in the wild. Our long-term investment in the Open Science Framework is starting to have an impact as more and more research communities adopt it to manage their research workflow and access to knowledge. We also added connective support for many of the major research tools on the market so that researchers could use the OSF without impacting their current workflow.

From the start, we wanted to help build a future in which the process, content, and outcomes of research are openly accessible by default. All scholarly content would be preserved and connected and transparency would stand as an aspirational good for scholarly work. All stakeholders would be included and respected in the research lifecycle and share the pursuit of truth as the primary incentive and motivation for scholarship.

Culture change requires simultaneous movement by funders, institutions, researchers, and service providers across national and disciplinary boundaries. The vision is achievable because openness, integrity, and reproducibility are shared values, the technological capacity is available, and sustainable business models that promote openness exist.

Encouraging change in this area is challenging, but COS has approached the challenge head-on with a strategy that addresses the key levers--building a community of like-minded researchers and advisors, the infrastructure to support the behavior, the training to enact it, and the incentives to change it.

Though we are a nonprofit, we are in a competitive, commercial landscape and we operate as such. We hire the top talent, pay competitively, and maintain a fast and flexible pace. We are also committed to the open source philosophy, inviting any interested party to take on projects to enhance our infrastructure for the benefit of the community. We are fortunate to have a start-up financial runway that has allowed us to create a stable platform to build on.

People Power

For the first three years, the staff size nearly doubled each year, and COS supported summer teams of 30-40 interns. With this rapid growth trajectory we tested many different technical and strategic options for pursuing our mission. The large majority of the team made significant improvements to the Open Science Framework, including support for filtered views of content, major hardware system migrations, development of a version 2 API, security and authentication enhancements, a full workflow process to support registrations, and a metadata database model called JamDB.

On the infrastructure, personnel, and process side, improvements included the establishment and growth of our Quality Assurance (QA), Product, and DevOps teams, refinement of modular development processes, and a redesign of our deployment processes for faster release and recovery. All of these improvements were made with an eye toward future scalability and sustainability, and made later product and service decisions possible.

COS’ metascience projects were a major part of the mission during this period. COS has orchestrated major reproducibility projects such as the Reproducibility Project: Psychology, Reproducibility Project: Cancer Biology, and the Many Labs and Many Analysts projects. The completion and publication of the Reproducibility Project: Psychology in 2015 was a particular highlight. A total of 270 co-authors completed 100 replications, produced completely open and reproducible documentation of the project on the OSF, and published the findings in Science. We prepared for substantial attention, but were still overwhelmed by it. The report was covered in well over 100 major media outlets, and today it maintains an Altmetric ranking as the 52nd most discussed of all 7.2 million research outputs they track. With more than 800 academic citations already, it is one of the most cited 2015 publications across all of science.

These metascience projects shed light on the difficulty of replicating research -- in many cases we discovered that replicating labs worked with incomplete research methods details, found it difficult to procure original research materials, or otherwise had difficulty uncovering all of the necessary information to complete a quality replication. We can improve this by providing better technology options and training on how to conduct replicable science, and by fixing incentive problems in the system.

Some of the most important work we do is to nudge research incentives toward openness and reproducibility. We work with institutions on updating their policies and procedures to acknowledge and reward open and reproducible practices in hiring, promotion, and tenure. We help funders establish policies promoting openness and use evidence of that behavior in evaluation for future funding. We cooperate with journals and publishers that adopt policies for open practices, offer credit for behaving openly, and introduce new publishing models that reward accuracy.

The published journal article is still the primary method of getting research out there, but not all results get published. As with many other forms of publishing, novel and positive research results are considered more attractive than replications and negative outcomes, even though they may be less useful. This creates incentives to avoid or ignore replications and negative results, even at the expense of accuracy. The consequence? Replications and negative results are rare in the published literature.

So we partnered with Dr. Chris Chambers to promote the Registered Reports publishing model for journals, which addresses these dysfunctional incentives. Registered Reports have two key features: they focus on project quality and design, offering peer review in advance of knowing the outcomes of the research, and commit to accept/reject decisions that are independent of the research outcomes.

Journals can also offer badges acknowledging open practices to authors who are willing and able to meet criteria to earn the badge. These simple badges signal that the journal values transparency, let authors signal that they have met transparency standards for their research, and provide an immediate signal of accessible data, materials, or preregistration to readers. Badges allow adopting journals to take a low-risk, resource-lite policy change toward increased transparency. Initial evidence suggests that badges are highly effective. The first adopting journal, Psychological Science, had 3% of published articles reporting open data in 2012-2013 before adopting badges. One year after adopting badges, 39% of published articles reported open data, and there was no change in sharing rates among comparison journals.

The training program has grown beyond a single consultant providing ad hoc workshop sessions. We improved our initial training format in the first year based on participant feedback, learning from observing other methods, and workshop data. Our current training sessions follow a more interactive, hands-on format and have moved to a sustainable train-the-trainer model that extends the impact to researchers who may not have access.

Since cultural change requires a decentralized, networked approach, our training team travels throughout the world to provide on-site training. Over the first three years, we held training sessions at over 50 U.S. institutions. To further facilitate this network of information sharing, we also developed a training curriculum available for free on the OSF, which is regularly used by the community and COS Ambassadors.

After establishing an initial set of core products and a user base over the first several years, we shifted focus towards forming strategic partnerships that facilitate scaling. During 2014, 2015, and 2016, we formed partnerships with leading organizations across a variety of sectors, with particular attention to those eager to transform the publishing landscape and integrate research workflows. This yielded partnerships on publishing efforts and institutional integrations. We have also sought opportunities to work with funders, both as funders of our efforts and as users of our products and services. A key example was a Funder Forum held in December, 2016 in partnership with the Health Research Alliance (HRA). HRA and COS hosted over 70 individuals representing more than 40 research funding organizations. This approach rapidly expanded COS’s ability to introduce strategies that promote open science to a broader national and international audience.

In addition to the development of our products and services, we have also refined our community engagement strategies. The COS Community Team quickly engages any interested person or community with an appropriate action step they can take to increase openness and transparency. These steps range from an individual researcher preregistering, posting a preprint, or using the OSF, to a research institution promoting the OSF through OSF Institutions, hosting workshops, signing TOP, or setting up a branded preprint service. This has led to faster stakeholder feedback loops and better product development processes. Despite some specialization, everyone maintains high awareness of all products and services, and all team members are prepared to represent COS initiatives at community events across the globe. Beyond the U.S., COS regularly participates in events in Europe and the U.K., and has presented in South Africa, Kenya, and Mexico. We sustain steady growth in audience engagement.

TOP Guidelines and Prereg Challenge

Even though researchers are adopting practices and tools as they are able, changing incentives needs buy-in from major stakeholders--journals, societies, and funders. The Transparency and Openness Promotion (TOP) Guidelines have been a successful demonstration of this approach. Drafted during a meeting of stakeholders hosted by COS in 2014 and fully implemented in 2015, TOP includes eight modular standards, each with three levels of increasing stringency. Adopters select which of the eight transparency standards are relevant for their community, and select a level of stringency of implementation for the selected standards. We maintain the TOP Guidelines for the community and facilitate promotion and adoption. Currently, there are over 750 journal and organizational signatories, and about 50 journals have adopted policies explicitly based on or influenced by TOP.

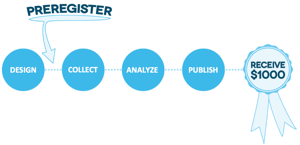

Preregistration is an unfamiliar practice in many areas of science, but adds credibility to research by deciding in advance what will be tested before data is collected. The Preregistration Challenge is a way to promote our values while incentivizing new or uncertain researchers to try preregistration. We will give 1,000 awards of $1,000 each to promote education and experience with preregistration.

Here's where we stand as of March 2017!

Funders: Laura and John Arnold Foundation, The Alfred P. Sloan Foundation, Wellspring Advisors, National Institutes of Health, The John Templeton Foundation, Institute of Museum and Library Services, The Hewlett Foundation, National Science Foundation, Defense Advanced Research Projects Agency, The Doris Duke Charitable Foundation, Swedish Foundation for Humanities and Social Sciences.

Subawardees: Open Knowledge Foundation, University of California - Davis, University of San Diego, Association for Psychological Science.

TOP Signatories: Acta Psychologica, Addgene, Addiction, AIS Transactions on Replication Research, American Academy of Neurology (4 journals), American Association for the Advancement of Science (AAAS) (4 journals), American Geophysical Union (AGU) (19 journals), American Heart Association (AHA) (12 journals), American Journal of Botany, American Journal of Community Psychology, American Journal of Political Science, American Journal of Public Health, American Meteorological Society (12 journals), American Society for Cell Biology (ASCB) (2 journals), Applications in Plant Sciences, Asian Journal of Social Psychology, Association for Psychological Science (APS) (3 journals), Autism Research, Behavioral Ecology, Behavioral Science & Policy, Bio-protocol, BioMed Central (BMC) (307 journals), Biotropica, Brain and Behavior, British Journal of Surgery, Canadian Journal of Experimental Psychology, Cognition and Emotion, Cognitive Psychology, Cognitive Science, Collabra, Comprehensive Results in Social Psychology, Conservation Biology, Cortex, Cultural Diversity & Ethnic Minority Psychology, Developmental Psychobiology, Developmental Science, Ecological Society of America (6 journals), Ecology and Evolution, Ecology Letters, eLife, Emerging Adulthood, European Journal of Neuroscience, European Journal of Personality, European Political Science, European Union Politics, Evolution, Evolution and Human Behavior, Experimental Psychology, F1000Research, FAIRDOM e.V., Frontiers (50 journals), HardwareX, Health and Environmental Sciences Institute (HESI), Hippocampus, Human Computation, Human Performance, Instructional Science, International Interactions, International Journal of Human-Computer Studies (IJHCS), International Journal of Primatology, International Journal of Selection and Assessment, Italian Political Science Review, Journal of Adolescence, Journal of Business and Psychology, Journal of Cell Biology, Journal of Cognitive Psychology, Journal of Conflict Resolution, Journal of Consumer Psychology, Journal of Consumer Research, Journal of Evolutionary Biology, Journal of Experimental Social Psychology, Journal of Liberty and International Affairs, Journal of Mathematical Psychology, Journal of Media Psychology, Journal of Memory and Language, Journal of Neurochemistry, Journal of Organizational Behavior, Journal of Personality Assessment, Journal of Research in Personality, Journal of Social and Personal Relationships, Journal of Social Psychology, Judgment and Decision Making, Language Learning, MDPI AG (161 journals), Medical Decision Making, Mind and Life Institute, Nicotine & Tobacco Research, Oikos, Organizational Behavior and Human Decision Processes, Organizational Research Methods, Palgrave Communications, Party Politics, PeerJ (2 journals), Pensoft Publishers (15 journals), Personal Relationships, PLOS (4 journals), Political Behavior, Political Science Research and Methods, Psi Chi Journal of Psychological Research, Psychology of Sport and Exercise, Psychonomic Society (7 journals), Public Administration Review, Quarterly Journal of Political Science, Research and Politics, Resource Identification Initiative, Sciencematters, Social Cognition, Social Psychology, Sociological Methods and Research, Sociological Science, State Politics and Policy Quarterly, Stress and Health, Survey Research Methods, Systematic Botany, The American Naturalist, The Auk: Ornithological Advances, The Royal Society (7 journals), Ubiquity Press (29 journals), Wellcome Open Research, Work, Aging, and Retirement.

Registered Reports Adopters: AIMS Neuroscience, American Journal of Political Science, American Political Science Review, American Politics Research, Attention, Perception, and Psychophysics, Cognition and Emotion, Cognitive Research: Principles and Implications, Comparative Political Studies, Comprehensive Results in Social Psychology, Cortex, Drug and Alcohol Dependence, eLife, European Journal of Neuroscience, Experimental Psychology, Frontiers in Cognition, Human Movement Science, Infancy, International Journal of Psychophysiology, Journal of Accounting Research, Journal of Business and Psychology, Journal of Cognitive Enhancement, Journal of European Psychology Students, Journal of Experimental Political Science, Journal of Media Psychology, Journal of Personnel Psychology, Judgment and Decision Making, Leadership Quarterly, Management and Organization Review, Nature Human Behaviour, NFS Journal, Nicotine & Tobacco Research, Perspectives on Psychological Science, Political Analysis, Political Behavior, Political Science Research and Methods, Public Opinion Quarterly, Review of Financial Studies, Royal Society Open Science, Animal Behavior and Cognition, Behavioral Neuroscience, Memory, Social Psychology, State Politics and Policy Quarterly, Stress and Health, Work, Aging, and Retirement.

Badge Adopters: Studies in Second Language Acquisition, Psychological Science, European Journal of Personality, International Journal of Primatology, Internet Archaeology, American Journal of Political Science, Language Learning, Journal of Social Psychology, Clinical Psychological Science, Journal of Research in Personality, Social Psychology, AIS Transactions on Replication Research, Psi Chi Journal of Psychological Research, and Canadian Journal of Experimental Psychology.

OSF Institution Partners: University of Southern California, The Lab @ DC, University of Washington, University of Notre Dame, Johns Hopkins University, University of California - San Diego, University of California - Riverside, University of Virginia, Busara Center for Behavioral Economics, Mind & Life Institute, New York University, Oklahoma State University, University of Colorado - Boulder, Laura and John Arnold Foundation, University of Cape Town, Virginia Commonwealth University, and Federation of Earth Science Information Partners (ESIP).

Conference Co-Organizers: American Association for the Advancement of Science, Association of Research Libraries, Health Research Alliance, Society for Improving Psychological Science, Whitman College.

Preprint Service Partners: AgriXiv- Open Access India, Berkeley Initiative for Transparency in Social Sciences (BITSS), Chinese Academy of Social Sciences, EngrXiv, Focused Ultrasound Foundation, MindArXiv, PaleoArXiv, PsyArXiv, Scientific Electronic Library Online (SciELO), SocArXiv.

Registry Service Partners: American Economic Association, American Political Science Association, Evidence in Governance and Politics, International Initiative for Impact Evaluation.

